Invented by Anuj Khanna Sohum, Charles Yong Jien Foong, and Madhusudana Ramakrishna; Affle (India) Limited, India

Artificial Intelligence (AI) has changed how we solve problems. Today, AI agents can work together and get smarter over time. But how do we keep these agents secure, make sure they can adapt, and keep their learning safe? A recent patent application offers a clear, structured answer to this question. Let’s break it down so you can really understand what’s new, why it matters, and how it all works.

Background and Market Context

AI agents are programs that can learn, reason, and act on their own. They help in many places—hospitals, traffic systems, online shops, even in your phone. Most AI agents are built for one job: a car’s AI keeps it in the lane, a shop’s AI suggests what to buy, a hospital’s AI helps doctors pick medicines. These agents are smart, but they don’t always work well together. Each one is stuck in its own bubble. If you want to use them for something new, you often have to start from scratch. That’s slow, wasteful, and expensive.

Now, think about a big city with hundreds of cars, buses, and trains, all trying to get people where they need to go. Or a hospital where different AI programs help with patient care, billing, and scheduling. If these AI agents could talk to each other, share what they know, and get smarter from these talks, things would work much better. But there are problems. What if an AI agent makes a mistake? What if someone steals the data? What if old agents keep using up resources even when they’re not useful anymore?

The market is hungry for a way to make AI agents work together, safely and smartly, without putting sensitive data at risk. Companies want to use AI in more ways, but rules about privacy and the risk of leaks hold them back. The world needs a method to let AI agents help each other and keep learning, all inside a safe space.

This is where the idea of a “secure cloud-based enclave” comes in. It is like a strong room where AI agents can meet, talk, and learn, but nothing private ever leaves the room. Inside, they can share ideas, try new things, and even bring in new agents or retire the old ones without losing what they’ve learned. This opens the door for better teamwork among AI, faster upgrades, and less waste.

The market for this is huge, from cities and hospitals to banks and online stores. Everywhere, people want smart help, but they also want to keep their secrets safe. This patent application aims to meet both needs: useful AI teamwork and strong data protection.

Scientific Rationale and Prior Art

Before this new idea, most AI systems were built for one job at a time. If you wanted a new job done, you had to build a new agent or change the old one a lot. Some systems let agents share data, but this was risky. Data could leak, mistakes could happen, and it was hard to check who did what. Logs were kept, but they were often simple—a list of actions, not a full story. If you wanted to know why an AI made a choice, or how it learned, you had to guess.

There are also special ways to teach AI to learn from one job and use it for another. This is called “transfer learning.” It helps a little, but it is not enough for a team of agents who need to work together in real time. When AI agents work in health care or with private data, security is a big worry. Past systems could not keep the data inside a safe bubble. Agents could not learn from each other without exposing private information. And when an agent was old or not needed, its knowledge could be lost, or worse, left behind and open to attack.

Some companies built “enclaves” or safe rooms in the cloud. These keep data safe from the outside. But within those rooms, AI agents still worked alone, and logs were not rich enough to help new agents learn in detail. If you tried to bring in new agents or models, it was hard to test them without risk. Removing old agents was messy and left gaps in learning.

This patent application aims to bring the missing parts together. It suggests a way for AI agents to work as a team, inside a safe cloud room, where every talk, every change, and every lesson is logged in detail. These logs are not just for looking back; they are used to train new agents, test new models, and keep everything clear and safe. The system can even compare two agents doing the same job, pick the better one, and cleanly retire the old one without losing its knowledge.

According to the application, earlier systems did not combine all of these at once: logging every step, letting agents adapt and learn from each other, keeping all data inside a secure enclave, and managing the full life of each agent from start to finish. That combination is what the application presents as its main difference from earlier approaches.

Invention Description and Key Innovations

Let’s walk through how this new system works, in simple terms.

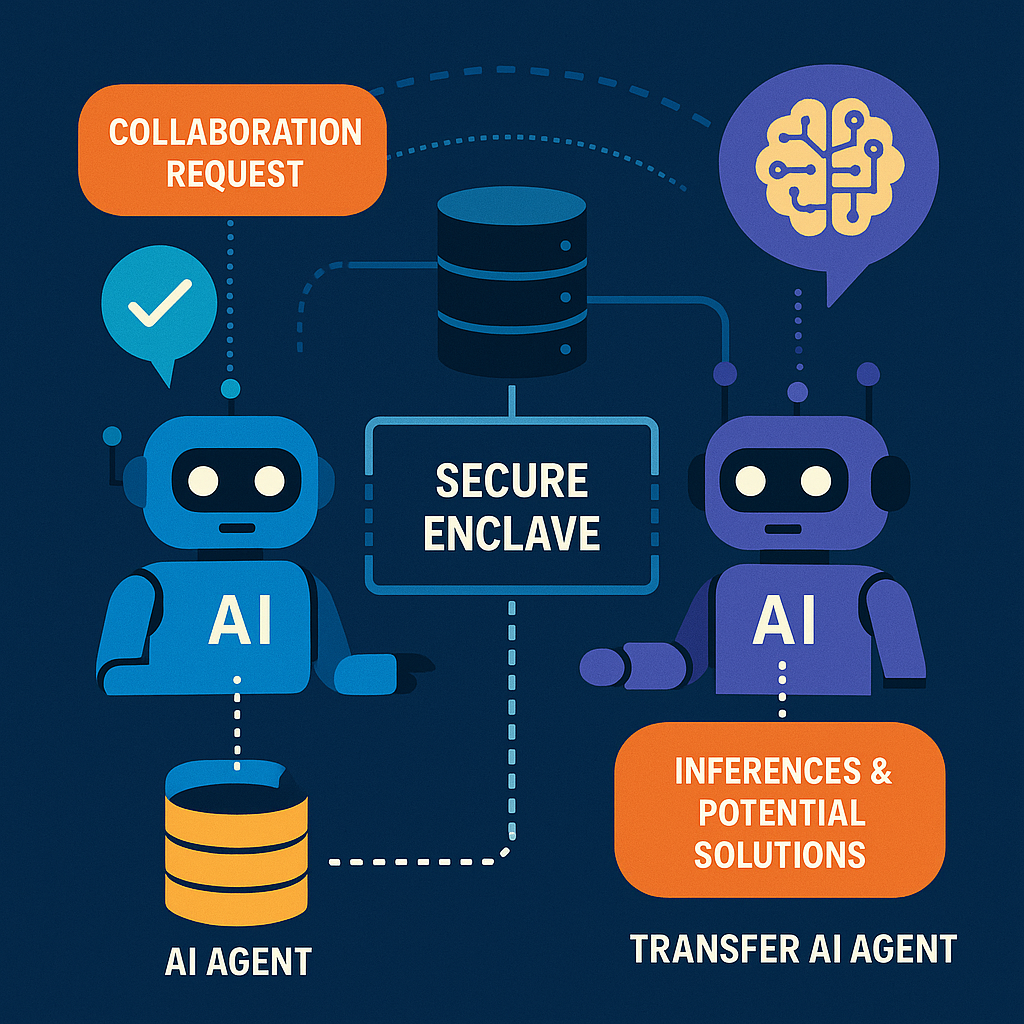

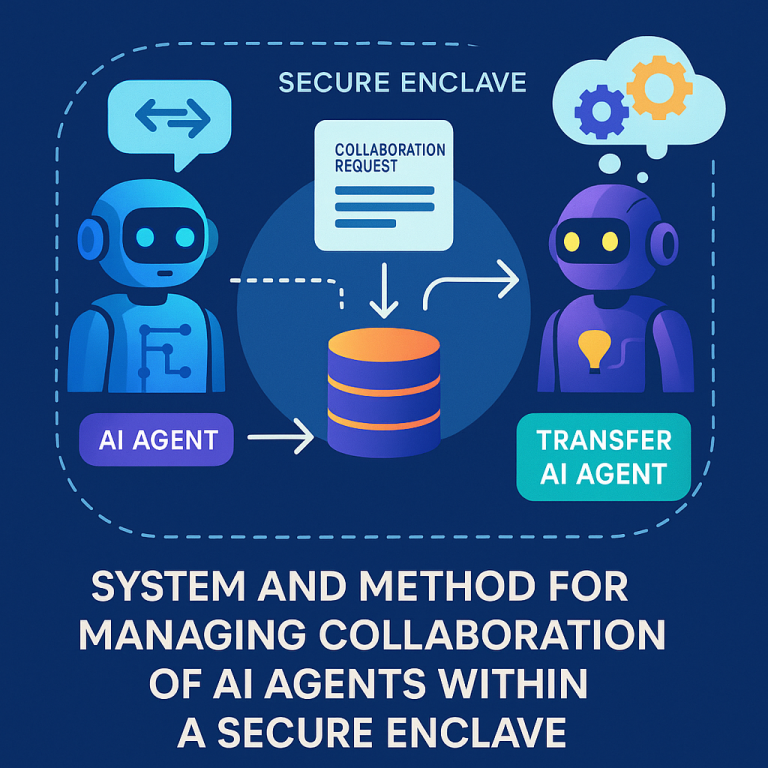

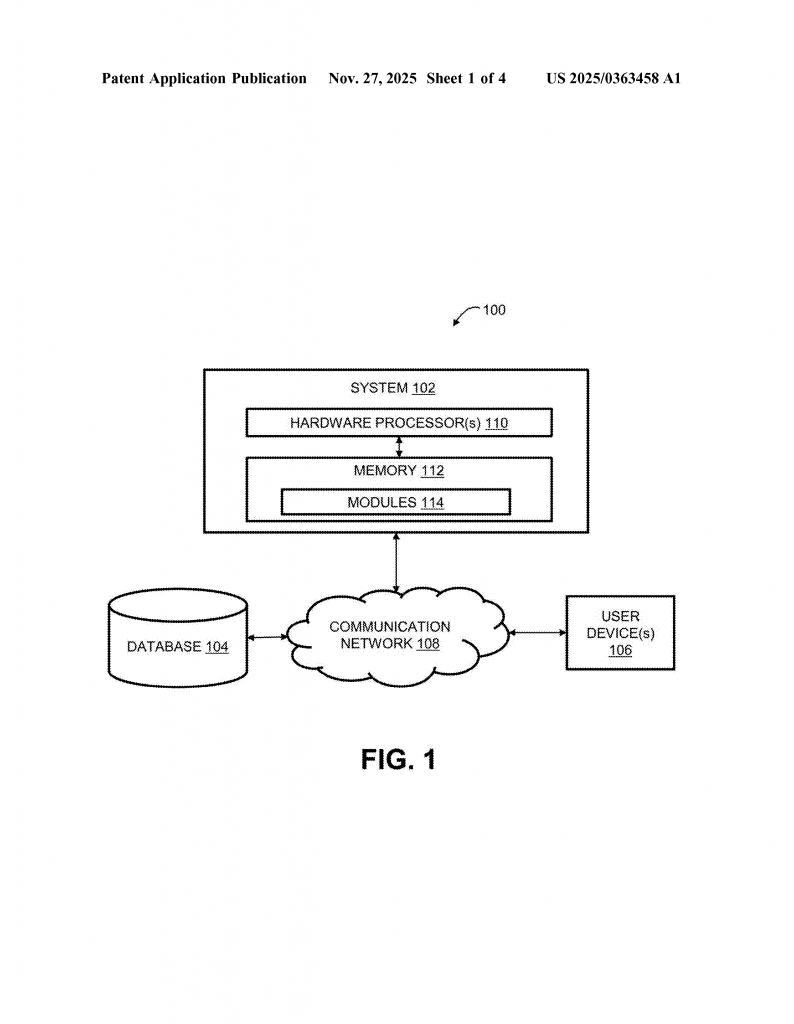

Imagine a big, locked room in the cloud. This room is called the “secure cloud-based enclave.” Only trusted AI agents are allowed inside. Each agent has its own job and skills. When one agent needs help—for example, a hospital’s AI needs a second opinion—it sends a message called a “collaboration request” to another agent inside the room.
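The application describes the collaboration request in prose only. As a minimal sketch, assuming a request is just a record of who asks, whom, for what, and with what context, the "only trusted agents inside" rule can be modeled as an enclave that refuses to route messages unless both sides are registered. The names `CollaborationRequest` and `Enclave` are hypothetical, not taken from the patent.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class CollaborationRequest:
    # Hypothetical message format: who asks, whom, for what, and with what context.
    requester_id: str
    target_id: str
    task: str
    context: dict
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class Enclave:
    """Hypothetical enclave that only routes requests between registered agents."""

    def __init__(self, trusted_agents):
        self.trusted = set(trusted_agents)
        self.inbox = {agent: [] for agent in self.trusted}

    def send(self, request: CollaborationRequest) -> None:
        # Both sides must already be inside the enclave; nothing is routed outside.
        if request.requester_id not in self.trusted or request.target_id not in self.trusted:
            raise PermissionError("both agents must be registered in the enclave")
        self.inbox[request.target_id].append(request)


enclave = Enclave({"hospital_ai", "second_opinion_ai"})
enclave.send(CollaborationRequest(
    "hospital_ai", "second_opinion_ai", "review diagnosis", {"symptoms": ["fever"]}
))
```

A request from an agent that was never registered raises `PermissionError` instead of leaving the room.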

The system does something special. The agent that asks for help logs everything: who is asking, what they want, what they know so far, and the time. This goes into a special database inside the enclave, and nothing leaves the room. It is like keeping a diary, but much more detailed. The log is not just a list of actions; it records every fact, every idea, and even softer signals such as confidence levels. If the agent gets feedback from a user, like a doctor saying “yes, that’s right” or “no, try this,” that is logged too.
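One way to picture this diary is an append-only table. The sketch below is an assumption, not the patent's schema: it uses Python's built-in `sqlite3` with an in-memory database as a stand-in for the enclave's internal store, and the table and column names (`interaction_log`, `confidence`, and so on) are hypothetical.

```python
import json
import sqlite3
from datetime import datetime, timezone


def open_log() -> sqlite3.Connection:
    # In-memory database stands in for the enclave's internal store (assumption).
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """CREATE TABLE interaction_log (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               agent_id TEXT NOT NULL,
               event TEXT NOT NULL,
               payload TEXT NOT NULL,
               confidence REAL,
               logged_at TEXT NOT NULL)"""
    )
    return conn


def log_event(conn, agent_id, event, payload, confidence=None):
    # Every step is appended with a timestamp; rows are never updated in place.
    conn.execute(
        "INSERT INTO interaction_log (agent_id, event, payload, confidence, logged_at) "
        "VALUES (?, ?, ?, ?, ?)",
        (agent_id, event, json.dumps(payload), confidence,
         datetime.now(timezone.utc).isoformat()),
    )
    conn.commit()


def history(conn, agent_id):
    # Replay an agent's log in the order it was written.
    rows = conn.execute(
        "SELECT event, payload, confidence FROM interaction_log "
        "WHERE agent_id = ? ORDER BY id",
        (agent_id,),
    ).fetchall()
    return [(event, json.loads(payload), conf) for event, payload, conf in rows]


conn = open_log()
log_event(conn, "hospital_ai", "collaboration_request",
          {"task": "review diagnosis", "known": ["fever"]}, confidence=0.62)
log_event(conn, "hospital_ai", "user_feedback", {"from": "doctor", "verdict": "correct"})
```

Storing facts, confidence, and feedback side by side is what later lets new agents be trained from the same record.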

The agent who gets the request—the “transfer agent”—now adapts itself. It checks what it knows, changes its settings if needed, and makes a plan. All these steps are logged. The agent then thinks about the problem, using its own AI model, and comes up with answers or ideas. It sends these back, and again, everything is written down.
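The adapt, plan, and respond cycle can be sketched as a small class that writes each step to its own log. This is a hypothetical illustration: the "adaptation" here only switches a `domain` setting named in the request, standing in for whatever model changes the real system makes, and `TransferAgent`'s fields and methods are invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class TransferAgent:
    # Hypothetical transfer agent; `settings` stands in for its model configuration.
    agent_id: str
    settings: dict
    log: list = field(default_factory=list)

    def _record(self, step, detail):
        self.log.append((step, detail))

    def adapt(self, context):
        # Toy adaptation: switch to the domain named in the request, if any.
        domain = context.get("domain", self.settings["domain"])
        if domain != self.settings["domain"]:
            self.settings = {**self.settings, "domain": domain}
        self._record("adapt", dict(self.settings))

    def plan(self, task):
        steps = ["retrieve prior cases", f"apply {self.settings['domain']} model", "draft answer"]
        self._record("plan", steps)
        return steps

    def respond(self, task, context):
        # Adapt, then plan, then answer; each step lands in the log.
        self.adapt(context)
        steps = self.plan(task)
        answer = f"{task}: reviewed via {len(steps)} steps in {self.settings['domain']} mode"
        self._record("respond", answer)
        return answer


agent = TransferAgent("second_opinion_ai", {"domain": "general"})
answer = agent.respond("review diagnosis", {"domain": "cardiology"})
```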

If a user, like a doctor or city worker, gives feedback, the agent learns from it. The agent can change its answers based on this new input. The system even lets agents look back at old logs, learning from what worked and what didn’t. This helps them get better over time, without ever needing to leave the safe room or risk data leaks.
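The application does not say which learning rule turns feedback into better answers. As one simple possibility, assuming feedback is a yes/no verdict on an answer strategy, an agent can keep an exponential moving average score per strategy, replay old logged verdicts to rebuild those scores, and prefer the best-scoring strategy. `FeedbackLearner` and the strategy names are hypothetical.

```python
from collections import defaultdict


class FeedbackLearner:
    """Hypothetical learner: keeps a running score per answer strategy."""

    def __init__(self, learning_rate=0.5):
        self.learning_rate = learning_rate
        self.scores = defaultdict(float)

    def record_feedback(self, strategy, approved):
        target = 1.0 if approved else -1.0
        # Exponential moving average pulls the score toward the latest verdict.
        self.scores[strategy] += self.learning_rate * (target - self.scores[strategy])

    def replay(self, past_log):
        # Re-learn from stored (strategy, approved) pairs kept inside the enclave.
        for strategy, approved in past_log:
            self.record_feedback(strategy, approved)

    def best_strategy(self, candidates):
        return max(candidates, key=lambda s: self.scores[s])


learner = FeedbackLearner()
learner.replay([("guideline_first", True), ("symptom_first", False), ("guideline_first", True)])
choice = learner.best_strategy(["guideline_first", "symptom_first"])
```

With a learning rate of 0.5, two approvals move `guideline_first` to 0.75 and one rejection moves `symptom_first` to -0.5, so the agent prefers the first.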

The system supports upgrades in a smart way. If a new AI model is ready, it can run side by side with the old one. The system keeps track of which one does better, based on real feedback and results. When the new one is proven to be better, it gets promoted. The old one is retired, but before it goes, all its special knowledge is saved and put into the logs for future use. This means nothing is lost, and the system keeps getting smarter.
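Running a new model next to the old one is often called champion/challenger or shadow testing. A minimal sketch, assuming "doing better" is measured as correct answers on the same labeled cases (the patent also mentions real user feedback, which this sketch does not model): the challenger is promoted only if it wins on enough cases, and the retired model's knowledge is copied into an archive first. `Model`, `shadow_compare`, and `min_cases` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Model:
    # Hypothetical model slot: a name, a prediction function, and learned knowledge.
    name: str
    predict: Callable[[int], int]
    knowledge: dict = field(default_factory=dict)


def shadow_compare(champion, challenger, cases, archive, min_cases=10):
    """Run both models on the same cases and promote the challenger only if it wins.

    Returns (active_model, correct_counts). Before the champion is retired, a copy
    of its knowledge is appended to `archive`, a stand-in for the enclave log.
    """
    correct = {champion.name: 0, challenger.name: 0}
    for x, expected in cases:
        for model in (champion, challenger):
            if model.predict(x) == expected:
                correct[model.name] += 1
    if len(cases) >= min_cases and correct[challenger.name] > correct[champion.name]:
        archive.append({"retired": champion.name, "knowledge": dict(champion.knowledge)})
        return challenger, correct
    return champion, correct


cases = [(x, x * 2) for x in range(12)]
old = Model("v1", lambda x: x * 2 if x < 6 else x, {"rule": "double small inputs"})
new = Model("v2", lambda x: x * 2)
archive = []
active, counts = shadow_compare(old, new, cases, archive)
```

Requiring a minimum number of cases keeps a lucky early streak from promoting a weaker model.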

Another smart part is how the system manages resources. If an agent is no longer needed, the system detects this. It saves what is important, shuts the agent down, and clears out any leftover data. This keeps the enclave tidy and leaves less open to attack.
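The save, shut down, and clear sequence can be sketched as an idle-agent reaper. This assumes "not needed anymore" means "inactive longer than a fixed limit", which is only one possible trigger; `AgentRecord`, `retire_idle_agents`, and the time values are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    # Hypothetical bookkeeping for one agent inside the enclave.
    agent_id: str
    last_active: float
    knowledge: dict = field(default_factory=dict)
    scratch: dict = field(default_factory=dict)
    running: bool = True


def retire_idle_agents(agents, now, idle_limit, archive):
    """Archive knowledge, stop the agent, and wipe leftovers for idle agents."""
    retired = []
    for agent in agents:
        if agent.running and now - agent.last_active > idle_limit:
            archive[agent.agent_id] = dict(agent.knowledge)  # keep what matters
            agent.running = False                            # shut the agent down
            agent.scratch.clear()                            # clear leftover data
            agent.knowledge.clear()                          # no copy left behind
            retired.append(agent.agent_id)
    return retired


agents = [
    AgentRecord("traffic_ai", last_active=100.0, knowledge={"peak": "8am"}, scratch={"tmp": 1}),
    AgentRecord("billing_ai", last_active=990.0),
]
archive = {}
retired = retire_idle_agents(agents, now=1000.0, idle_limit=300.0, archive=archive)
```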

The system can also control what each agent can do and for how long. If an agent is given a special skill for a limited time, such as an AI that speaks as a celebrity in an advertising campaign, it has that skill only while the campaign runs. After that, the skill is taken away, and any related knowledge is either safely stored or erased.
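Time-limited skills behave like expiring capability grants. The sketch below assumes each grant carries an expiry time and some related knowledge; once expired, the skill is refused and removed, and its knowledge is moved to a store if one is given or dropped otherwise. `CapabilityGrants` and `celebrity_voice` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class CapabilityGrants:
    """Hypothetical per-agent registry of skills that expire at a set time."""

    grants: dict = field(default_factory=dict)  # skill -> (expires_at, related_knowledge)

    def grant(self, skill, expires_at, knowledge=None):
        self.grants[skill] = (expires_at, knowledge or {})

    def allowed(self, skill, now):
        # A skill is usable only if it was granted and has not yet expired.
        entry = self.grants.get(skill)
        return entry is not None and now < entry[0]

    def revoke_expired(self, now, store=None):
        """Remove expired skills; keep their knowledge in `store` if given, else drop it."""
        expired = [s for s, (exp, _) in self.grants.items() if now >= exp]
        for skill in expired:
            _, knowledge = self.grants.pop(skill)
            if store is not None:
                store[skill] = knowledge
        return expired


grants = CapabilityGrants()
grants.grant("celebrity_voice", expires_at=200.0, knowledge={"campaign": "spring"})
vault = {}
```

Checking `allowed` at call time, not only at grant time, means an expired skill stops working even before the cleanup runs.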

This method is flexible. It can even transfer only parts of an agent, like its voice, style, or way of talking. This helps make agents that feel more human or match a brand’s style, but only when and where it’s allowed.
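Transferring only parts of an agent can be pictured as copying a filtered subset of attributes. A minimal sketch, assuming an agent's profile is a dictionary and "allowed" is a simple set of attribute names set by policy; the attribute names and `transfer_attributes` are hypothetical.

```python
def transfer_attributes(source, target, requested, allowed):
    """Copy only the requested attributes that policy allows; return (profile, moved)."""
    transferable = [a for a in requested if a in allowed and a in source]
    updated = dict(target)  # leave the original target profile untouched
    for attr in transferable:
        updated[attr] = source[attr]
    return updated, transferable


source = {"voice": "warm", "style": "playful", "tone": "casual",
          "medical_knowledge": {"drug_notes": "private"}}
target = {"voice": "neutral", "domain": "retail"}
updated, moved = transfer_attributes(
    source, target,
    requested=["voice", "style", "medical_knowledge"],
    allowed={"voice", "style", "tone"},
)
```

Here the voice and style move across, while `medical_knowledge` is requested but blocked because policy does not list it.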

All the while, privacy rules and safety checks are built into the system code. If the rules change, the enclave updates itself in real time. This keeps everything safe and fair, even as the agents and their jobs change.
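Updating rules "in real time" can be sketched as a policy store that every action is checked against at the moment it happens, with the whole rule set swapped in one step. This is an assumption about the mechanism, not the patent's design; `PolicyEngine`, the action names, and the data classes are hypothetical. Python's `threading.Lock` guards the swap so no check sees a half-applied update.

```python
import threading


class PolicyEngine:
    """Hypothetical rule store that every agent action is checked against."""

    def __init__(self, rules):
        self._lock = threading.Lock()
        self._rules = {action: set(classes) for action, classes in rules.items()}
        self.version = 1

    def update(self, new_rules):
        # Replace the whole rule set at once; later checks see only the new rules.
        with self._lock:
            self._rules = {action: set(classes) for action, classes in new_rules.items()}
            self.version += 1

    def permits(self, action, data_class):
        with self._lock:
            allowed = self._rules.get(action, set())
        return data_class in allowed


engine = PolicyEngine({"share": {"aggregate", "anonymized"}})
before = engine.permits("share", "anonymized")
engine.update({"share": {"aggregate"}})  # e.g. a stricter privacy rule takes effect
after = engine.permits("share", "anonymized")
```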

In summary, the big innovations here are:

— Keeping all talks and actions inside a safe, locked room in the cloud.

— Logging every step in great detail, with facts, ideas, feelings, and feedback.

— Using these logs to train new agents, test upgrades, and keep learning.

— Letting agents adapt, grow, and share skills, but only in safe, controlled ways.

— Smartly retiring old agents and skills without losing what they learned.

— Building privacy and rules into the core, so safety is never an afterthought.

This approach makes AI teamwork possible in places where privacy and safety matter most. It helps companies and cities use AI in new ways, without fear of leaks or mistakes, while always getting better over time.

Conclusion

This patent application introduces a simple but powerful way for AI agents to work together, learn, and evolve—all inside a secure, private space in the cloud. By logging every detail, learning from feedback, testing new ideas safely, and keeping everything under strict control, this system solves many old problems. It makes AI safer, more useful, and ready for the real world where privacy and teamwork matter most. As AI becomes a bigger part of our lives, inventions like this will help us trust and use AI in new, better ways.

To read the full application, visit https://ppubs.uspto.gov/pubwebapp/ and search for publication number 20250363458.