Invented by Carol L. Novak, Benjamin L. Odry, Atilla Peter Kiraly, Jiancong Wang, Siemens Healthcare GmbH

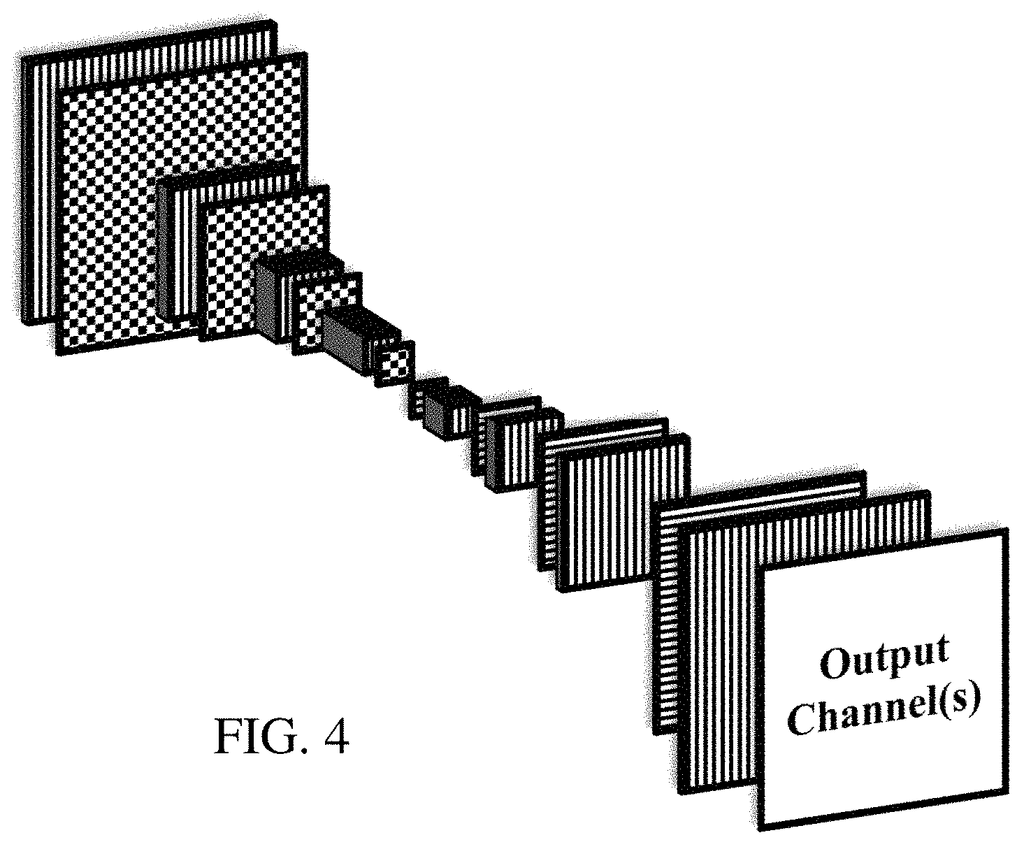

Traditionally, lung segmentation has been performed manually by radiologists, which is a time-consuming and subjective process. With advances in deep learning and computer vision, automated lung segmentation using generative networks has emerged as a promising alternative. Generative networks, typically built from convolutional neural networks (CNNs) such as U-Net-style image-to-image architectures, have shown strong performance in segmenting lung regions from medical images accurately and efficiently.

The market for a trained generative network for lung segmentation is driven by several factors. Firstly, the increasing prevalence of lung-related diseases, such as lung cancer, chronic obstructive pulmonary disease (COPD), and pneumonia, has created a need for efficient and reliable lung segmentation techniques. Accurate segmentation of lung regions enables early detection, precise diagnosis, and effective treatment planning for these diseases.

Secondly, the growing adoption of digital medical imaging systems, such as computed tomography (CT) and magnetic resonance imaging (MRI), has generated a massive amount of medical image data. Manual segmentation of lung regions from such large datasets is impractical and time-consuming. Therefore, the demand for automated lung segmentation solutions, powered by trained generative networks, has surged.

Moreover, the advancements in deep learning algorithms and hardware acceleration technologies have significantly improved the performance and speed of generative networks. This has further fueled the market growth for trained generative networks for lung segmentation. The ability of these networks to learn from large datasets and generalize well to unseen images makes them highly valuable in medical imaging applications.

Furthermore, the market for trained generative networks for lung segmentation is also driven by the increasing investments in healthcare AI and machine learning research. Governments, healthcare organizations, and private companies are investing heavily in developing AI-powered solutions for improving healthcare outcomes. Lung segmentation using trained generative networks is a prime example of such solutions, as it can enhance the accuracy and efficiency of lung-related disease diagnosis and treatment.

In conclusion, the market for a trained generative network for lung segmentation using medical images is witnessing significant growth due to the increasing demand for accurate and efficient lung segmentation techniques. The ability of generative networks to automate the segmentation process, improve diagnostic accuracy, and enhance treatment planning has made them highly valuable in the field of medical imaging. With further advancements in deep learning algorithms and hardware acceleration technologies, the market for trained generative networks for lung segmentation is expected to expand even further in the coming years.

The Siemens Healthcare GmbH invention works as follows

A generative network is used to segment lung lobes, to localize lung fissures, or to train a machine network for lobar segmentation or localization. Deep learning can be used for segmentation even when training data is sparse: an image-to-image or generative network localizes the fissures, which increases the available training data. The deep-learnt network, fissure localization, or other segmentation can benefit from generative fissure localization.

Background for A trained generative network for lung segmentation using medical images

The present embodiments relate to automated lung lobe segmentation. Radiologist reports and treatment plans depend on knowing the lobar boundaries. If an area of lung disease has been detected, for example, knowing the lobar region helps in automating reporting and in planning intervention or treatment. The completeness of the fissure that separates lobar regions can also be used to predict the success of certain lobectomy techniques. Fissure regions may also help when performing deformable registration of volumes across time points.

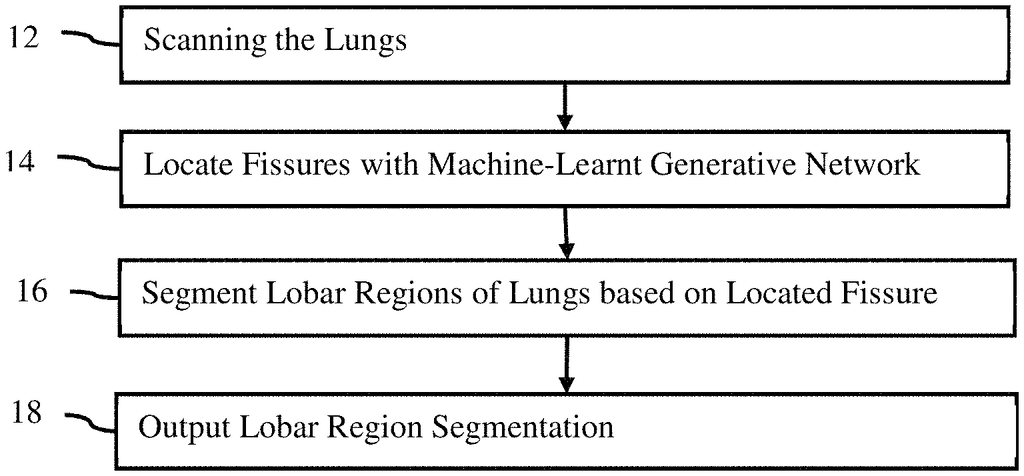

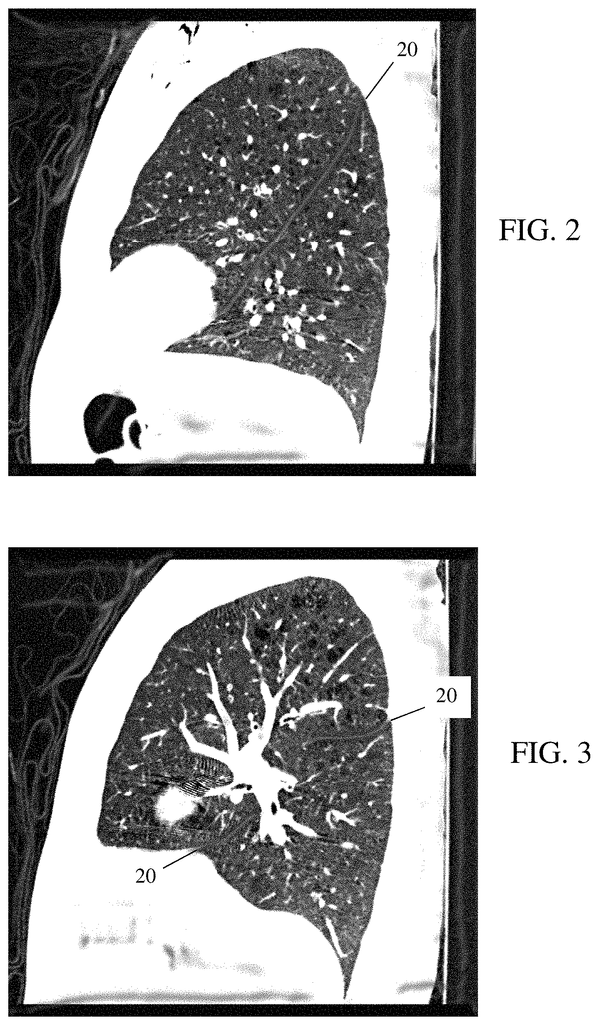

The fissures that separate the lung into lobar regions appear as thin, relatively intense lines on computed tomography images. Fissures vary in thickness, and in some cases they are incomplete or missing. Automated lobe segmentation is difficult because of wide variation in anatomy and because of imaging artifacts. The difficulties include fissures that are incomplete or missing, either because they are too faint to be seen at the resolution of the image or because they are absent in the patient in question, and image artifacts such as noise or motion. Automated lobe segmentation is often aided by the absence of vessels around the fissures. However, vessels that cross fissure boundaries present additional challenges for automated methods.

Previous methods of automated fissure or lobar segmentation may have difficulty dealing with variations in fissure completeness and with vessel crossings. Machine learning based on values from the Hessian matrix has been proposed for lobar segmentation, but it is difficult to obtain enough training data. Annotating fissures in a CT volume is tedious and difficult. Because identifying fissures requires experience and knowledge, and because fissures vary widely in appearance, the number of users who are able to annotate images is limited. Even with machine learning, anatomical variation can still cause problems.
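To make "values from the Hessian matrix" concrete, here is a minimal sketch that estimates second derivatives on a single 2D slice and scores how line-like a pixel is. It assumes a fissure shows up as a thin bright line in the slice. The `line_response` measure and the toy image are hypothetical illustrations, not the features used by the prior methods mentioned above.

```python
import math

def hessian_eigenvalues(img, y, x):
    """Eigenvalues (smaller, larger) of the 2x2 Hessian at an interior pixel,
    estimated with central finite differences."""
    dyy = img[y + 1][x] - 2 * img[y][x] + img[y - 1][x]
    dxx = img[y][x + 1] - 2 * img[y][x] + img[y][x - 1]
    dxy = (img[y + 1][x + 1] - img[y + 1][x - 1]
           - img[y - 1][x + 1] + img[y - 1][x - 1]) / 4.0
    mean = (dxx + dyy) / 2.0
    root = math.sqrt(((dxx - dyy) / 2.0) ** 2 + dxy ** 2)
    return mean - root, mean + root

def line_response(img, y, x):
    """Hypothetical bright-line score: strong negative curvature across
    the line and little curvature along it gives a high value."""
    lo, hi = hessian_eigenvalues(img, y, x)
    if lo >= 0:  # no negative curvature, so not a bright ridge
        return 0.0
    return abs(lo) - abs(hi)

# Toy 5x5 slice with a bright horizontal line on row 2.
toy = [[1.0 if row == 2 else 0.0 for _ in range(5)] for row in range(5)]
on_line = line_response(toy, 2, 2)
off_line = line_response(toy, 1, 2)
```

In a 3D volume the same idea uses a 3x3 Hessian, where a fissure behaves like a thin plate rather than a line. A classifier trained on such values still needs annotated examples, which is the bottleneck described above.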

By way of introduction, the preferred embodiments described below include methods, computer-readable media, and systems for lung lobe segmentation, for lung fissure localization, or for training a machine network for segmentation or localization. Deep learning can be used for segmentation even when training data is sparse: an image-to-image or generative network localizes the fissures, which increases the available training data. The deep-learnt network, fissure localization, or other segmentation can benefit from generative fissure localization.

In a first aspect, a method is provided for lung lobe segmentation in a medical imaging system. A medical scanner scans the lungs of a patient, providing first imaging data that represents the lungs. An image processor applies a machine-learnt generative network to the first imaging data. The machine-learnt generative network was trained to output second imaging data that includes labeled fissures. The image processor segments the lobar regions of the lungs represented in the first imaging data using the labeled fissures. An image of the lobar regions of the patient's lungs is shown on a display.
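The last processing step of this aspect, segmenting lobar regions from labeled fissures, can be sketched on a toy 2D slice. The sketch below is one simple assumption about how that step could work: it treats fissure pixels as barriers and labels the 4-connected lung regions between them with a breadth-first flood fill. The function name `label_regions` and the binary mask layout are hypothetical; the source does not specify the segmentation algorithm.

```python
from collections import deque

def label_regions(lung_mask, fissure_mask):
    """Label 4-connected lung regions separated by fissure pixels.
    Returns (labels, count); label 0 means background or fissure."""
    rows, cols = len(lung_mask), len(lung_mask[0])
    labels = [[0] * cols for _ in range(rows)]
    count = 0
    for r in range(rows):
        for c in range(cols):
            if not lung_mask[r][c] or fissure_mask[r][c] or labels[r][c]:
                continue
            count += 1  # start a new region at this unlabeled lung pixel
            labels[r][c] = count
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and lung_mask[ny][nx]
                            and not fissure_mask[ny][nx]
                            and labels[ny][nx] == 0):
                        labels[ny][nx] = count
                        queue.append((ny, nx))
    return labels, count

# Toy slice: a 5x4 lung with a complete fissure along row 2.
lung = [[1] * 4 for _ in range(5)]
fissure = [[0] * 4 for _ in range(5)]
fissure[2] = [1] * 4
labels, count = label_regions(lung, fissure)
```

With a complete fissure the fill finds two regions. If a single fissure pixel is missing, the fill leaks through the gap and the two regions merge, which illustrates why incomplete fissures make lobar segmentation hard for simple methods.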

In a second aspect, a system is provided for lung fissure localization. A medical scanner scans the lungs of a patient, producing imaging data that represents the lungs. A machine-learnt image-to-image network is used to locate the fissures in the lungs as represented in the imaging data. A display is configured to show an image as a function of the located fissures.

In a third aspect, a method is provided for training a machine to segment lung lobes. Manual annotations of fissures are received for a first subset of slices of a volume that represents the lungs. A fissure filter is applied to a second subset of slices of the volume and identifies the locations of fissures in those slices. A segmentation classifier is trained to segment lobar regions using the fissure locations in the second subset and the fissure annotations from the first subset. The trained segmentation classifier is then stored.
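The data-assembly part of this third aspect can be sketched as follows: slices with manual annotations keep them, and every other slice gets its fissure mask from a fissure filter. This is a minimal sketch under stated assumptions. The names `assemble_training_labels` and `threshold_filter` are hypothetical, and the threshold filter is only a stand-in for whatever fissure filter is actually used (for example, a trained generative network).

```python
def threshold_filter(slice_2d, threshold=0.5):
    """Stand-in fissure filter (assumption): marks pixels above a threshold."""
    return [[1 if value > threshold else 0 for value in row] for row in slice_2d]

def assemble_training_labels(volume, manual_annotations, fissure_filter):
    """Build one fissure mask per slice.
    manual_annotations maps slice index -> mask (the first subset);
    all remaining slices (the second subset) are labeled by the filter.
    Returns slice index -> (mask, source)."""
    labels = {}
    for z, slice_2d in enumerate(volume):
        if z in manual_annotations:
            labels[z] = (manual_annotations[z], "manual")
        else:
            labels[z] = (fissure_filter(slice_2d), "filter")
    return labels

# Toy volume of three 2x2 slices; only slice 0 was annotated by hand.
volume = [
    [[0.9, 0.1], [0.2, 0.8]],
    [[0.6, 0.4], [0.3, 0.7]],
    [[0.1, 0.1], [0.9, 0.9]],
]
manual = {0: [[1, 0], [0, 1]]}
training_labels = assemble_training_labels(volume, manual, threshold_filter)
```

The combined masks would then serve as ground truth for training the segmentation classifier. Keeping the `source` tag makes it possible to weight or audit filter-generated labels separately from manual ones.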

The following claims define the invention. Nothing in this section should be construed as limiting those claims. Additional aspects and benefits of the invention will be discussed below, in conjunction with the preferred embodiments. They may be claimed later independently or together.

A fully convolutional generative network trained for fissure localization segments the fissure. The network can be trained together with a discriminative network in an adversarial process. The network can be used to generate ground truth for deep learning of a lung lobe segmentation classifier, to localize the fissure for segmentation, or to show the location of a fissure for planning.
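The source does not give the loss used in the adversarial process, so the following is only a sketch assuming the standard binary cross-entropy formulation of adversarial training. Here `d_real` and `d_fake` are hypothetical discriminator outputs: the probability that an annotated fissure map, or a generated one, is judged to be real.

```python
import math

def bce(prob, target):
    """Binary cross-entropy for a single probability, clipped for stability."""
    eps = 1e-12
    prob = min(max(prob, eps), 1.0 - eps)
    return -(target * math.log(prob) + (1.0 - target) * math.log(1.0 - prob))

def discriminator_loss(d_real, d_fake):
    """The discriminator should score annotated maps as real (1)
    and generated maps as fake (0)."""
    return bce(d_real, 1.0) + bce(d_fake, 0.0)

def generator_loss(d_fake):
    """The generator is rewarded when its output is scored as real (1)."""
    return bce(d_fake, 1.0)

# A generator that fools the discriminator has a lower loss.
fooled = generator_loss(0.9)
caught = generator_loss(0.1)
```

In practice an adversarial term like this is often combined with a direct per-pixel loss against the annotated fissure map. Whether the described network does so is not stated in the text.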

Current approaches to segmenting lung lobes are difficult to use and require manual corrections. Lobar segmentation becomes more useful and practical for physicians when its results are more reliable, complete, and accurate. Deep learning is promising, but it is difficult to apply directly and efficiently when the goal is to detect fissures. Deep learning becomes more efficient when a generative network is used to localize the fissure. The generative network highlights regions in images for use in lobar segmentation. Detecting the fissures is also important for determining their completeness, which affects treatment planning.

The fissure localization may be used to generate training data. Rather than annotating every fissure by hand, the deep-learnt generative network that highlights fissures can supply fissure locations for deep learning of the lobar segmentation classifier. A deep-learning convolutional pipeline is used for lobar segmentation and/or fissure localization. A major challenge in developing a deep-learning pipeline for segmentation is the availability of annotated datasets for training.

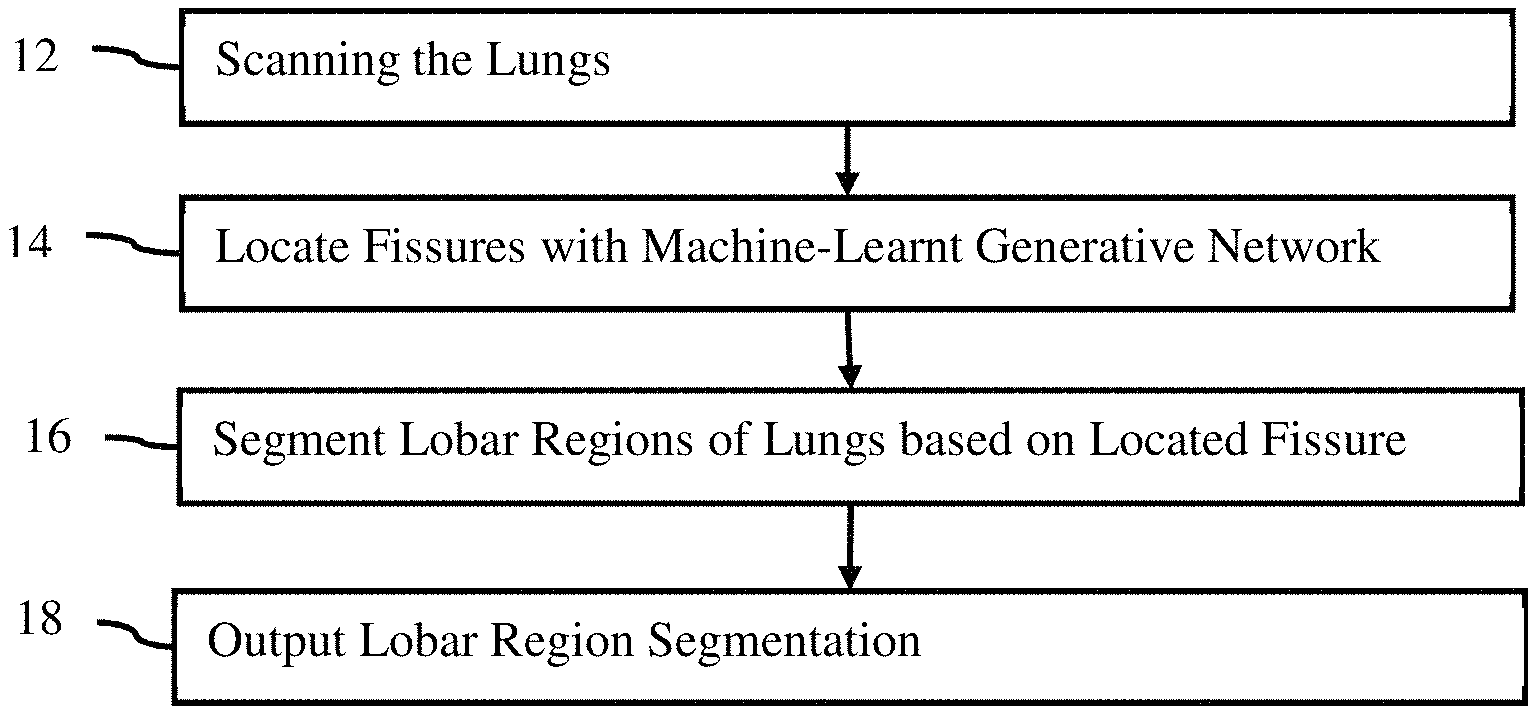

FIG. 1 shows a method of lung lobe segmentation. A machine-learnt network locates one or more fissures, which are used to segment one or more lobar regions in the patient's lungs. The machine-learnt network can be used in other ways, for example to train a segmentation classifier.

The method of FIG. 1 can be performed in the order shown (e.g., top to bottom or numerically) or in a different order. Additional, different, or fewer acts may be used. Act 18 could be omitted, for example. As another example, acts 12 and 14 may be performed without act 16, in which case act 18 may show the fissure rather than a lobar segmentation.

The method can be implemented by any medical imaging system that processes images from medical scans. The medical imaging system can be a medical diagnostic imaging device, review station, workstation, computer, picture archiving and communication system (PACS), server, mobile device, a combination of these, or any other image processor. The system shown or described for FIG. 8 implements the method, but other systems can be used. A hardware processor in any such system, interacting with memory (e.g., a PACS database or cloud storage), a display, and/or a medical scanner, can perform the acts.

The acts can be performed automatically. The user scans the patient or retrieves scan data from an earlier scan, and may then activate the process. After activation, the fissures are located, the lobar regions are segmented, and a segmentation image is output to a display. The user does not need to enter the location of any anatomy in the scan data. Because a generative network is used for fissure localization, the user is less likely to need to correct the segmentation or the fissure localization. Users may still provide input, for example to change the values of modeling parameters, correct detected locations, or confirm accuracy.

In act 12, the lungs are scanned by a medical scanner. The description refers to lungs and fissures in the plural, but a single lung or a single fissure can also be used. The medical scanner generates imaging data and makes the image or imaging data available. Alternatively, the data can be acquired from memory or storage, for example by loading a previously created dataset from a PACS. A processor can extract the data from a medical records database or a picture archiving and communication system.

The medical image or imaging data is a volume of 3D data that represents the patient. The data can be in any format. The terms imaging and image are used interchangeably, but the data or image may be in a format different from that of an actual displayed image. The medical imaging data may, for example, consist of a number of scalar values representing different locations in a Cartesian or polar coordinate format that differs from the display format. Another example is a set of red, green, and blue (RGB) values that are output to a display in order to generate the image. The medical image can be a currently or previously displayed image, in the display format or in another format. Imaging data is a dataset that can be used for imaging, such as scan data or a generated image that represents the patient.
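The difference between an acquisition format and a display format can be illustrated with a small scan-conversion sketch that resamples polar samples onto a Cartesian grid using nearest-neighbour lookup. The layout of `samples` (angle index first, then radius index, with angles evenly covering a full circle) and the function name `polar_to_cartesian` are assumptions made for this example only.

```python
import math

def polar_to_cartesian(samples, max_radius, size):
    """Resample samples[angle_index][radius_index] onto a size x size
    Cartesian grid centred on the origin (nearest neighbour).
    Pixels farther than max_radius from the centre are left at 0.0."""
    n_angles, n_radii = len(samples), len(samples[0])
    half = (size - 1) / 2.0
    grid = [[0.0] * size for _ in range(size)]
    for row in range(size):
        for col in range(size):
            x = (col - half) / half * max_radius
            y = (row - half) / half * max_radius
            r = math.hypot(x, y)
            if r > max_radius:
                continue
            theta = math.atan2(y, x) % (2 * math.pi)
            a = int(round(theta / (2 * math.pi) * n_angles)) % n_angles
            k = min(int(round(r / max_radius * (n_radii - 1))), n_radii - 1)
            grid[row][col] = samples[a][k]
    return grid

# Toy data: 8 angles, 3 radii, where each sample stores its radius index.
samples = [[0, 1, 2] for _ in range(8)]
grid = polar_to_cartesian(samples, max_radius=2.0, size=5)
```

Real systems usually interpolate between neighbouring samples rather than picking the nearest one. The point here is only that the stored scalars and the displayed pixels need not share a coordinate system.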

Any type of medical imaging data and corresponding medical scanner can be used. In one embodiment, the imaging data is a computed tomography (CT) image acquired with a CT system. A chest CT dataset, for example, can be obtained by scanning the lungs. In CT, raw detector data is reconstructed into a three-dimensional representation. Another example is magnetic resonance (MR) data representing a patient, acquired with an MR system by scanning the patient with an imaging sequence. The data is obtained in k-space and represents an interior region of the patient. Fourier analysis is used to reconstruct the k-space data into a three-dimensional object or image space. The data may also be ultrasound data, for which a transducer array and beamformers are used to scan the patient. The polar-coordinate data is detected and beamformed into ultrasound data that represents the patient.
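The Fourier reconstruction step for MR can be illustrated with a tiny discrete Fourier transform written out directly. This is a didactic sketch on a 3x3 toy image, assuming fully sampled Cartesian k-space. The `dft2` helper is written for clarity rather than speed, and a real reconstruction would use a fast Fourier transform and handle many details omitted here.

```python
import cmath

def dft2(image, inverse=False):
    """Naive 2D discrete Fourier transform of a small grid.
    With inverse=True, maps k-space samples back to image space."""
    rows, cols = len(image), len(image[0])
    sign = 1 if inverse else -1
    out = [[0j] * cols for _ in range(rows)]
    for u in range(rows):
        for v in range(cols):
            total = 0j
            for y in range(rows):
                for x in range(cols):
                    angle = 2 * cmath.pi * (u * y / rows + v * x / cols)
                    total += image[y][x] * cmath.exp(sign * 1j * angle)
            out[u][v] = total / (rows * cols) if inverse else total
    return out

# Simulate acquisition (image -> k-space), then reconstruct (k-space -> image).
image = [[0, 1, 0], [2, 3, 1], [0, 0, 4]]
kspace = dft2(image)
reconstructed = dft2(kspace, inverse=True)
```

The centre sample `kspace[0][0]` holds the sum of all pixel values, and the inverse transform recovers the original image up to floating-point error.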

The medical imaging data represents the tissue and/or bone structure of a patient. For imaging the lungs, the imaging data may include the response from the lungs and from the surrounding anatomy (e.g., the upper torso). In other embodiments, the data represents both structure and function, as with nuclear medicine data.

The medical imaging data represents a two- or three-dimensional region of the patient. For example, the data can represent an area or slice of the patient as pixel values. As another example, the data can represent a three-dimensional volume as voxels. The three-dimensional representation may be formatted as a stack of two-dimensional planes or slices. Values are provided for each of multiple locations distributed in two or three dimensions. The medical imaging data is acquired as one or more frames of data, where a frame represents the scan region at a given time or over a given period. The dataset can also represent an area or volume over time, for example providing a 4D representation of the patient.
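As a small illustration of these layouts, the sketch below stores a volume as a stack of 2D slices using nested lists and then stacks volumes over time for a 4D dataset. The index order `volume[z][y][x]` and the helper names are assumptions chosen for this example; actual systems use their own conventions and array libraries.

```python
def make_volume(depth, height, width, fill=0):
    """Volume as a stack of `depth` slices, each height x width."""
    return [[[fill] * width for _ in range(height)] for _ in range(depth)]

def slice_along_z(volume, z):
    """One stored 2D slice of the stack."""
    return volume[z]

def slice_along_y(volume, y):
    """A reformatted plane that cuts across every stored slice."""
    return [plane[y] for plane in volume]

volume = make_volume(depth=2, height=3, width=4)
volume[1][2][3] = 7  # set a single voxel

# A 4D dataset: one volume (frame) per time point.
frames = [volume, make_volume(depth=2, height=3, width=4)]
```

The same voxel is reachable through either slicing direction, which is what allows a volume acquired as one stack of slices to be viewed in other planes.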

In act 14, an image processor applies a machine-learnt generative network to the imaging data. The machine-learnt network localizes one or more fissures. In lung imaging, some fissures are “inferred” while others are clearly visible. The “inferred” fissures are those that some viewers can see in the displayed image but others cannot. Inferred fissures can occur when the fissure itself is too thin, or the displayed image too noisy, for the fissure to be seen clearly. Inferred fissures also occur in patients whose fissure is completely or partially missing. Tracing “inferred” fissures is useful for lobar segmentation but not for determining fissure completeness (i.e., whether the patient in question has a complete fissure). The machine-learnt generative network, which localizes fissures from the imaging data, is trained to detect “visible” fissures. Inferred parts can also be localized by training the network accordingly. In one embodiment, however, only the visible fissures are localized, and further image processing is used to determine a complete lobar segmentation.
